How Netflix uses A/B testing to keep you watching

Imagine this: you open Netflix, scroll through your recommendations, and a striking movie thumbnail catches your eye. Without thinking twice, you click on it. But did you know that Netflix has tested multiple versions of that thumbnail before deciding which one to show you?

A/B testing is a data-driven approach that companies like Netflix, Google, and Amazon use to optimize user experiences. Today, we will dive into the fundamental principles of A/B testing, examine its connection to hypothesis testing, and walk through a hypothetical case study based on Netflix to illustrate its practical application.

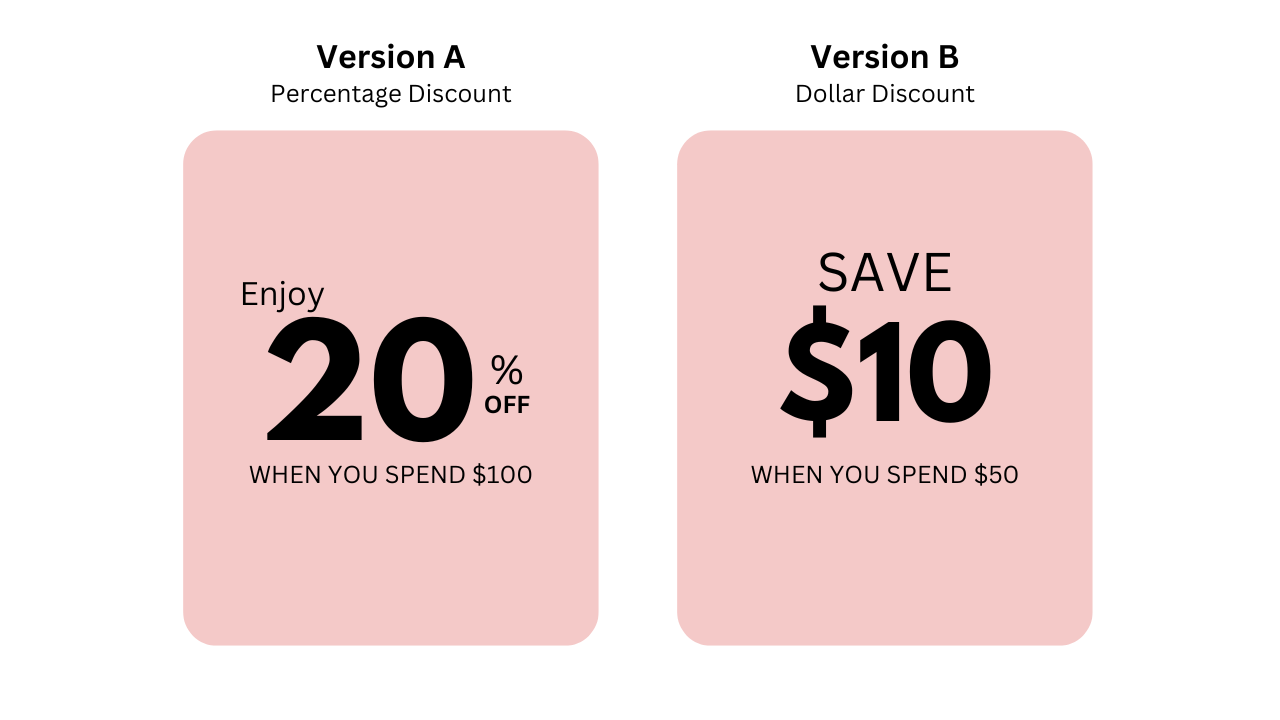

A/B testing is an experiment in which two versions of something (an app feature, a webpage, an ad, etc.) are tested against each other to see which performs better on a chosen metric.

- Version A: The existing version

- Version B: The modified version

Users are randomly split into two groups: one sees Version A, while the other sees Version B. Their behavior is then analyzed to determine which version leads to better results.
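One common way to implement the random split is to hash a stable user ID together with an experiment name, so that a returning user keeps seeing the same version for the whole test. The sketch below assumes string user IDs; the function name `assign_variant` and the experiment name are hypothetical, and this is a general technique rather than Netflix's documented implementation.

```python
import hashlib
from typing import Sequence


def assign_variant(user_id: str, experiment: str, variants: Sequence[str]) -> str:
    """Deterministically map a user to one variant (hypothetical helper).

    Hashing user_id together with the experiment name gives each user a
    stable, effectively random bucket, so the same user always sees the
    same version, and different experiments split users independently.
    """
    key = f"{experiment}:{user_id}".encode("utf-8")
    digest = hashlib.sha256(key).hexdigest()
    bucket = int(digest, 16) % len(variants)  # roughly uniform over variants
    return variants[bucket]


# Usage: split users between the existing version A and the modified version B.
print(assign_variant("user-42", "hp-thumbnail", ["A", "B"]))
```

Because the assignment is a pure function of the IDs, no lookup table is needed to remember who saw what, and the same helper works unchanged for three or more variants.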

A/B testing is built on hypothesis testing. Before running an A/B test, we define two hypotheses:

- Null Hypothesis (H₀): There is no difference between Version A and Version B.

- Alternative Hypothesis (H₁): There is a difference between Version A and Version B in the metric being measured.
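For a rate-style metric such as click-through rate, one standard way to test H₀ is the pooled two-proportion z-test. In the formula below, the symbols are introduced for illustration: $x_A, x_B$ are the numbers of clicks and $n_A, n_B$ the numbers of views for each version.

$$
z = \frac{\hat{p}_B - \hat{p}_A}{\sqrt{\hat{p}\,(1 - \hat{p})\left(\frac{1}{n_A} + \frac{1}{n_B}\right)}},
\qquad
\hat{p}_A = \frac{x_A}{n_A},\quad
\hat{p}_B = \frac{x_B}{n_B},\quad
\hat{p} = \frac{x_A + x_B}{n_A + n_B}
$$

Under H₀ and with large samples, $z$ is approximately standard normal. If the resulting p-value falls below a chosen significance level $\alpha$ (commonly 0.05), H₀ is rejected in favor of H₁.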

To gain a deeper understanding of A/B testing and how it can optimize user engagement, let’s analyze a hypothetical case study on how Netflix could experiment with different thumbnails for Harry Potter to maximize viewer interest.

The experiment has two goals:

- Increase the click-through rate (CTR) on the Harry Potter movie page.

- Maximize watch time by encouraging users to start and continue watching.
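The two goals translate into two per-variant metrics: CTR (clicks divided by impressions) and watch time. A minimal sketch, assuming a hypothetical `Impression` record with one row per thumbnail impression; the field names are illustrative and not Netflix's actual schema.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass
class Impression:
    variant: str          # thumbnail shown, e.g. "A" (hypothetical field)
    clicked: bool         # whether the user clicked the title
    watch_minutes: float  # minutes watched after clicking (0 if no click)


def summarize(impressions):
    """Return {variant: (ctr, average watch minutes per click)}."""
    shown = defaultdict(int)
    clicks = defaultdict(int)
    minutes = defaultdict(float)
    for imp in impressions:
        shown[imp.variant] += 1
        if imp.clicked:
            clicks[imp.variant] += 1
            minutes[imp.variant] += imp.watch_minutes
    result = {}
    for v in shown:
        ctr = clicks[v] / shown[v]
        avg_watch = minutes[v] / clicks[v] if clicks[v] else 0.0
        result[v] = (ctr, avg_watch)
    return result


# Usage with a tiny, made-up log:
logs = [
    Impression("A", True, 40.0),
    Impression("A", False, 0.0),
    Impression("B", True, 10.0),
    Impression("B", True, 30.0),
]
print(summarize(logs))  # {'A': (0.5, 40.0), 'B': (1.0, 20.0)}
```

Measuring watch time per click rather than per impression keeps the second metric from simply repeating the CTR; which definition to use is a design choice the experimenter has to fix before the test starts.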

- Null Hypothesis (H₀): Changing the thumbnail does not affect the click-through rate (CTR).

- Alternative Hypothesis (H₁): A different thumbnail will lead to a higher CTR.
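Because this H₁ is one-sided (a *higher* CTR), the matching test looks only at the upper tail of the normal distribution. A minimal sketch of the pooled two-proportion z-test using only the Python standard library; the function name and the click and view counts in the example are hypothetical.

```python
import math


def one_sided_z_test(clicks_a, views_a, clicks_b, views_b):
    """Test H0: CTR_B == CTR_A against H1: CTR_B > CTR_A.

    Returns (z, p_value) from the pooled two-proportion z-test, which relies
    on a normal approximation and is reasonable for large samples.
    """
    p_a = clicks_a / views_a
    p_b = clicks_b / views_b
    pooled = (clicks_a + clicks_b) / (views_a + views_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / views_a + 1 / views_b))
    z = (p_b - p_a) / se
    # Upper-tail probability of the standard normal: P(Z >= z).
    p_value = 0.5 * math.erfc(z / math.sqrt(2))
    return z, p_value


# Usage: current thumbnail at 5.0% CTR vs. a new one at 5.3% (made-up numbers).
z, p = one_sided_z_test(5_000, 100_000, 5_300, 100_000)
print(f"z = {z:.2f}, p = {p:.4f}")
```

With these illustrative numbers, z comes out at roughly 3 and the p-value is well below 0.05, so H₀ would be rejected at α = 0.05.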

Sample size: Before running the test, Netflix would need to estimate how many users are required to detect a meaningful difference.
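One standard way to make this estimate for a CTR-style metric is a power calculation based on the normal approximation to the two-proportion test. The sketch below is not Netflix's documented method: the function name is hypothetical, the baseline CTR and target lift in the example are invented, and α = 0.05 with 80% power are conventional defaults.

```python
import math
from statistics import NormalDist


def sample_size_per_group(baseline_ctr, relative_lift, alpha=0.05, power=0.8):
    """Approximate users needed per group for a two-sided two-proportion test.

    baseline_ctr:  CTR of the current thumbnail (e.g. 0.05 for 5%)
    relative_lift: smallest relative improvement worth detecting (0.10 = +10%)
    """
    p1 = baseline_ctr
    p2 = baseline_ctr * (1 + relative_lift)
    p_bar = (p1 + p2) / 2
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # critical value for alpha
    z_beta = NormalDist().inv_cdf(power)           # quantile for desired power
    numerator = (
        z_alpha * math.sqrt(2 * p_bar * (1 - p_bar))
        + z_beta * math.sqrt(p1 * (1 - p1) + p2 * (1 - p2))
    ) ** 2
    return math.ceil(numerator / (p2 - p1) ** 2)


# Usage: detect a 10% relative lift on a hypothetical 5% baseline CTR.
print(sample_size_per_group(0.05, 0.10))
```

With these assumptions the result is roughly 31,000 users per group. Because the required size grows with the inverse square of the difference, halving the lift you want to detect roughly quadruples the sample.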

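Test duration: The experiment must run long enough to capture typical user behavior, ideally over full weekly cycles, since viewing habits can differ between weekdays and weekends.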

Suppose Netflix runs the test for 3 weeks, ensuring each variation gets at least 100,000 views for statistical confidence.
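The duration follows from simple arithmetic once the sample size and traffic are known. A rough sketch, assuming a hypothetical number of new eligible users arriving each day (the 20,000 below is invented, as is the function name), that rounds the result up to whole weeks:

```python
import math


def required_duration_days(users_per_group, num_groups, daily_eligible_users):
    """Days needed to expose enough users, rounded up to whole weeks.

    Assumes each day brings daily_eligible_users new, not-yet-assigned users.
    """
    total_needed = users_per_group * num_groups
    days = math.ceil(total_needed / daily_eligible_users)
    # Round up to full weeks so each day of the week is covered equally often.
    return math.ceil(days / 7) * 7


# Usage: 100,000 users in each of three groups, 20,000 new users per day.
print(required_duration_days(100_000, 3, 20_000))  # 21
```

Here 300,000 users at 20,000 per day take 15 days, which rounds up to 21 days, consistent with the three-week test described above.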

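Users are split into three groups, and each user sees only one version. Netflix would then track which thumbnail leads to more clicks and longer watch times. With more than two versions, this setup is often called an A/B/n test: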

- Group 1 → Sees thumbnail A

- Group 2 → Sees thumbnail B

- Group 3 → Sees thumbnail C

Suppose that after the three weeks of testing, the data show:

- Version A → 15% higher CTR.

- Version B → 10% higher CTR.

- Version C → No significant increase.
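With three thumbnails, a natural first check is whether CTR differs across the versions at all, for example with a chi-square test of independence on clicks versus non-clicks. The sketch below uses only the standard library and relies on the fact that a chi-square distribution with 2 degrees of freedom has survival function exp(-x/2). The click counts in the example are hypothetical (5.75%, 5.5% and 5.0% CTR, i.e. 15% and 10% above an assumed 5% baseline for A and B, and no lift for C), and the function name is illustrative.

```python
import math


def chi_square_3_variants(clicks, views):
    """Chi-square test of independence for click/no-click across 3 variants.

    clicks and views are sequences of length 3. Returns (statistic, p_value).
    The test has (3 - 1) * (2 - 1) = 2 degrees of freedom, and the chi-square
    survival function with 2 degrees of freedom is exp(-x / 2).
    """
    if len(clicks) != 3 or len(views) != 3:
        raise ValueError("expected exactly three variants")
    overall_ctr = sum(clicks) / sum(views)
    stat = 0.0
    for c, n in zip(clicks, views):
        expected_click = n * overall_ctr
        expected_skip = n * (1 - overall_ctr)
        stat += (c - expected_click) ** 2 / expected_click
        stat += ((n - c) - expected_skip) ** 2 / expected_skip
    p_value = math.exp(-stat / 2)
    return stat, p_value


# Usage: thumbnails A, B, C with 100,000 views each (made-up counts).
stat, p = chi_square_3_variants([5_750, 5_500, 5_000], [100_000] * 3)
print(f"chi2 = {stat:.1f}, p = {p:.2e}")
```

Here the statistic is roughly 57 and the p-value is far below 0.05. A significant result only says that at least one thumbnail differs; concluding that A beats B still requires a pairwise comparison, ideally with a correction for multiple comparisons such as Bonferroni.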

Based on these results, Netflix could prioritize Version A to maximize the click-through rate, provided that A's lead over B is statistically significant rather than random noise.